Containers are all the rage, and they will solve all your problems. (A hint of sarcasm there.) Write once, run everywhere!? Ever heard statements like this? (*cough* Java *cough*) Throughout my IT career, technology forecasters have liked to predict what will shake things up, and technology does disrupt itself every few years.

Containers are a technology I’m personally excited about. My current role has me involved in cloud automation, including an interesting look at container technology. One of the items a private cloud should have is a private, trusted registry.

Trust is a BIG factor, regardless of whether you host your private registry with a public source code repository provider, use Docker Enterprise, or pick something else. With security breaches so commonplace these days, security has to be at the forefront. I’m sure there are other options, and if I’m missing something, feel free to contact me on Twitter @steveschofield

VMware offers a free, open source registry called Harbor. Anyone can set it up and configure it, either on-prem or hosted in a public cloud. This blog post contains my notes, config settings and adventures along the way. My hope is that you evaluate all options when choosing a trusted registry for your company.

I don’t profess to be an expert who knows everything there is to know about setting up the right architecture to support containers. When in doubt, start a proof of concept and evaluate various tools. This gives people an opportunity to geek out and learn, which is why I enjoy the IT field.

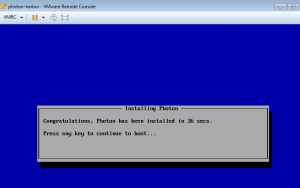

I used Photon OS, provided by VMware. It’s an OS that ships with the Docker engine built in. Other OSes can run Docker; I chose Photon for starters to help me learn more about the OS.

Download full ISO from

https://vmware.github.io/photon/

- Install Photon minimal with a partition big enough to store your images. I still have to figure out how to use external storage so it can be expanded.

I learned that the DNS setting needs a space between the server entries so systemd-resolved.service will properly configure /etc/resolv.conf. The /etc/resolv.conf file is automatically managed by systemd-resolved.service.

https://github.com/vmware/photon/blob/master/docs/photon-admin-guide.md

#Add static networks

root@photon-harbor [ ~ ]# vi /etc/systemd/network/10-static-eth0.network

[Match]

Name=eth0

[Network]

Address=1.1.1.111/24

Gateway=1.1.1.1

DNS=1.2.3.4 5.6.7.8

Domains=example.com
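As a rough sketch of the same step done from a script instead of vi, the function below writes the eth0 file with a heredoc and joins multiple DNS servers with single spaces, which is the format described above. The function name `write_static_network` and the temporary output directory are my own assumptions for illustration; on a real Photon host the target directory would be /etc/systemd/network.

```shell
#!/bin/sh
# Hypothetical helper: write a systemd-networkd static config for eth0.
# Usage: write_static_network OUT_DIR ADDRESS/PREFIX GATEWAY DOMAIN DNS1 [DNS2 ...]
write_static_network() (
  out_dir=$1; addr=$2; gateway=$3; domain=$4
  shift 4
  dns="$*"   # remaining arguments joined with single spaces
  file="$out_dir/10-static-eth0.network"
  cat > "$file" <<EOF
[Match]
Name=eth0

[Network]
Address=$addr
Gateway=$gateway
DNS=$dns
Domains=$domain
EOF
  chmod 644 "$file"   # keep the file world-readable, as in the chmod step
  printf '%s\n' "$file"
)

# Demo against a throwaway directory rather than /etc/systemd/network.
demo_dir=$(mktemp -d)
write_static_network "$demo_dir" 1.1.1.111/24 1.1.1.1 example.com 1.2.3.4 5.6.7.8
grep '^DNS=' "$demo_dir/10-static-eth0.network"   # prints: DNS=1.2.3.4 5.6.7.8
```

Writing to a scratch directory first makes it easy to inspect the file before copying it into place and restarting systemd-networkd.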

#Upload or create Docker networking files

SCP upload docker network files in /etc/systemd/network

OR

Create file called 10-static-docker0.netdev in /etc/systemd/network

[NetDev]

Name=docker0

Kind=bridge

Create file called 10-static-docker0.network in /etc/systemd/network

[Match]

Name=docker0

[Network]

Address=192.168.3.0/24
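If you would rather script the two docker0 files than upload them, something like the following should work. It is only a sketch: the function name `write_docker_bridge` is made up for this example, and it writes into a directory you pass in, so you can try it in a scratch directory before pointing it at /etc/systemd/network.

```shell
#!/bin/sh
# Hypothetical helper: create the docker0 bridge definition (.netdev)
# and its address assignment (.network) in the given directory.
# Usage: write_docker_bridge OUT_DIR ADDRESS/PREFIX
write_docker_bridge() (
  out_dir=$1; bridge_addr=$2
  cat > "$out_dir/10-static-docker0.netdev" <<EOF
[NetDev]
Name=docker0
Kind=bridge
EOF
  cat > "$out_dir/10-static-docker0.network" <<EOF
[Match]
Name=docker0

[Network]
Address=$bridge_addr
EOF
  # Same permissions as the chmod 644 step in this post.
  chmod 644 "$out_dir/10-static-docker0.netdev" "$out_dir/10-static-docker0.network"
)

# Demo against a throwaway directory.
demo_dir=$(mktemp -d)
write_docker_bridge "$demo_dir" 192.168.3.0/24
ls -l "$demo_dir"
```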

#Change permissions on static ip

#chmod 644 /etc/systemd/network/*

root@photon-harbor [ /etc/systemd/network ]# ls -l

-rw-r--r-- 1 root root 34 Dec 29 00:18 10-static-docker0.netdev

-rw-r--r-- 1 root root 54 Dec 29 00:19 10-static-docker0.network

-rw-r--r-- 1 root root 129 May 8 17:00 10-static-eth0.network

#Add icmp at bottom of file

# Open /etc/systemd/scripts/iptables, add to bottom

# Add ability to respond to icmp pings

iptables -A INPUT -p icmp -j ACCEPT

#Enable root login

vi /etc/ssh/sshd_config

PermitRootLogin yes
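Here is a minimal sketch of making that change without opening an editor. PermitRootLogin is a server-side option, so the file to change is the SSH daemon's config (sshd_config). The function name `set_permit_root_login` is hypothetical, and the demo runs against a temporary file rather than the real config.

```shell
#!/bin/sh
# Hypothetical helper: force "PermitRootLogin yes" in an sshd_config-style file.
# An existing PermitRootLogin line (commented out or not) is rewritten;
# if none exists, the setting is appended.
set_permit_root_login() (
  conf=$1
  pattern='^[#[:space:]]*PermitRootLogin[[:space:]].*'
  if grep -Eq "$pattern" "$conf"; then
    tmp=$(mktemp)
    sed -E "s/$pattern/PermitRootLogin yes/" "$conf" > "$tmp"
    cat "$tmp" > "$conf"   # overwrite in place so the file keeps its permissions
    rm -f "$tmp"
  else
    printf 'PermitRootLogin yes\n' >> "$conf"
  fi
)

# Demo on a scratch copy; point it at the real file only when you are ready.
demo_conf=$(mktemp)
printf '#PermitRootLogin no\nPort 22\n' > "$demo_conf"
set_permit_root_login "$demo_conf"
cat "$demo_conf"
```

Allowing root login over SSH is convenient for a lab, but it is worth revisiting before anything production-like.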

Restart services to apply changes from above

#restart ssh

systemctl restart sshd

#firewall

systemctl restart iptables

#restart network and dns daemons

systemctl restart systemd-networkd.service

systemctl restart systemd-resolved.service

Docker section

#Remove existing Docker install on Photon OS, which is version 1.13.x

root@photon-harbor [ /etc/systemd/network ]# tdnf erase docker

Removing:

docker x86_64 1.13.1-3.ph1 80.46 M

Total installed size: 80.46 M

Is this ok [y/N]:y

Testing transaction

Running transaction

Complete!

root@photon-harbor [ /etc/systemd/network ]#

#Install Docker Compose

curl -L https://github.com/docker/compose/releases/download/1.13.0/docker-compose-`uname -s`-`uname -m` > /usr/local/bin/docker-compose

chmod +x /usr/local/bin/docker-compose

#Install TAR and GZIP packages

tdnf install -y tar gzip

#Download latest version of Docker

#Run from command line

curl -fsSLO https://get.docker.com/builds/Linux/x86_64/docker-17.04.0-ce.tgz && tar --strip-components=1 -xvzf docker-17.04.0-ce.tgz -C /usr/bin

#Add user and automatic startup files.

============================================

groupadd -r docker

============================================

echo '[Unit]
Description=Docker Application Container Engine
Documentation=https://docs.docker.com
After=network.target docker.socket
Requires=docker.socket

[Service]
Type=notify
ExecStart=/usr/bin/dockerd -H fd:// -s overlay2
ExecReload=/bin/kill -s HUP $MAINPID
LimitNOFILE=infinity
LimitNPROC=infinity
LimitCORE=infinity
TasksMax=infinity
TimeoutStartSec=0
Delegate=yes
KillMode=process

[Install]
WantedBy=multi-user.target
' > /etc/systemd/system/docker.service

============================================

echo '[Unit]
Description=Docker Socket for the API
PartOf=docker.service

[Socket]
ListenStream=/var/run/docker.sock
SocketMode=0660
SocketUser=root
SocketGroup=docker

[Install]
WantedBy=sockets.target
' > /etc/systemd/system/docker.socket
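An alternative to the echo approach is a heredoc with a quoted delimiter. Copy and paste from a web page tends to mangle quote characters, and a quoted heredoc also keeps the shell from expanding anything inside the unit text (which matters for a value like $MAINPID in docker.service). This is only a sketch: the function name `write_docker_socket_unit` is made up, and the demo writes into a scratch directory instead of /etc/systemd/system.

```shell
#!/bin/sh
# Hypothetical helper: write the docker.socket unit into the given directory.
# The quoted 'EOF' delimiter means the body is written literally, with no
# variable or command substitution.
write_docker_socket_unit() (
  unit_dir=$1
  cat > "$unit_dir/docker.socket" <<'EOF'
[Unit]
Description=Docker Socket for the API
PartOf=docker.service

[Socket]
ListenStream=/var/run/docker.sock
SocketMode=0660
SocketUser=root
SocketGroup=docker

[Install]
WantedBy=sockets.target
EOF
)

# Demo against a throwaway directory.
demo_dir=$(mktemp -d)
write_docker_socket_unit "$demo_dir"
cat "$demo_dir/docker.socket"
```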

Reboot VM

Run these two commands after reboot of Photon OS

• systemctl enable docker

• systemctl start docker

Harbor Installation

https://github.com/vmware/harbor/blob/master/docs/installation_guide.md

#Upload the offline installer files (the download link is listed in the reference links)

• Create /var/opt/harbor

• chmod -R 777 /var/opt/harbor/

• Edit harbor.cfg (see raw config listed below)

• Run /var/opt/harbor/install.sh
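For the "Edit harbor.cfg" step, a small helper can set individual keys from a script so the change is repeatable. This is a sketch under my own assumptions: the function name `set_harbor_cfg` is hypothetical, it only understands the simple `key = value` lines that harbor.cfg uses, and the demo edits a temporary file with the value registry.example.com chosen purely for illustration.

```shell
#!/bin/sh
# Hypothetical helper: set "key = value" in a harbor.cfg-style file.
# An existing uncommented line for the key is replaced; otherwise the
# setting is appended. Commented lines (starting with #) are left alone.
# Note: awk -v interprets backslash escapes in the value.
set_harbor_cfg() (
  cfg=$1; key=$2; value=$3
  tmp=$(mktemp)
  awk -v k="$key" -v v="$value" '
    $0 ~ ("^" k "[ \t]*=") { print k " = " v; found = 1; next }
    { print }
    END { if (!found) print k " = " v }
  ' "$cfg" > "$tmp"
  cat "$tmp" > "$cfg"   # overwrite in place so the file keeps its permissions
  rm -f "$tmp"
)

# Demo on a scratch file with two lines in harbor.cfg format.
demo_cfg=$(mktemp)
printf 'hostname = harbor.example.com\nui_url_protocol = http\n' > "$demo_cfg"
set_harbor_cfg "$demo_cfg" hostname registry.example.com
cat "$demo_cfg"
```

Run any such edits before install.sh, since the installer reads harbor.cfg when it prepares the container configuration.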

#Create /etc/nginx folder and add sym link for nginx.conf

• root@photon-harbor [ /var/opt/harbor ]# mkdir /etc/nginx

• root@photon-harbor [ /var/opt/harbor ]# ln -s /var/opt/harbor/common/config/nginx/nginx.conf /etc/nginx

#Reference links, misc commands

• https://github.com/vmware/photon/blob/master/docs/photon-admin-guide.md#default-firewall-settings

• http://www.virten.net/2015/04/basic-commands-for-vmware-photon-and-docker/

• http://vmware.github.io/harbor/

• https://github.com/vmware/photon/issues/588

• https://github.com/moby/moby/issues/26237

Slack Channel on VMware Code

vmwarecode.slack.com (subscribe to the harbor channel)

Restart all docker containers

• docker restart $(docker ps -a -q)

• docker-compose -f docker-compose.yml up -d (to start up after a reboot)

Harbor.cfg

## Configuration file of Harbor

#The IP address or hostname to access admin UI and registry service.

#DO NOT use localhost or 127.0.0.1, because Harbor needs to be accessed by external clients.

hostname = harbor.example.com

#The protocol for accessing the UI and token/notification service, by default it is http.

#It can be set to https if ssl is enabled on nginx.

ui_url_protocol = http

#The password for the root user of mysql db, change this before any production use.

db_password = changeme

#Maximum number of job workers in job service

max_job_workers = 3

#Determine whether or not to generate certificate for the registry’s token.

#If the value is on, the prepare script creates new root cert and private key

#for generating token to access the registry. If the value is off the default key/cert will be used.

#This flag also controls the creation of the notary signer’s cert.

customize_crt = on

#The path of cert and key files for nginx, they are applied only the protocol is set to https

ssl_cert = /data/cert/server.crt

ssl_cert_key = /data/cert/server.key

#The path of secretkey storage

secretkey_path = /data

#Admiral’s url, comment this attribute, or set its value to NA when Harbor is standalone

admiral_url = NA

#NOTES: The properties between BEGIN INITIAL PROPERTIES and END INITIAL PROPERTIES

#only take effect in the first boot, the subsequent changes of these properties

#should be performed on web ui

#************************BEGIN INITIAL PROPERTIES************************

#Email account settings for sending out password resetting emails.

#Email server uses the given username and password to authenticate on TLS connections to host and act as identity.

#Identity left blank to act as username.

email_identity =

email_server = smarthost.example.com

email_server_port = 25

email_username =

email_password =

email_from = Harbor <[email protected]>

email_ssl = false

##The initial password of Harbor admin, only works for the first time when Harbor starts.

#It has no effect after the first launch of Harbor.

#Change the admin password from UI after launching Harbor.

harbor_admin_password = changeme

##By default the auth mode is db_auth, i.e. the credentials are stored in a local database.

#Set it to ldap_auth if you want to verify a user’s credentials against an LDAP server.

auth_mode = ldap_auth

#The url for an ldap endpoint.

ldap_url = ldap://ad-ldap.example.com

#A user’s DN who has the permission to search the LDAP/AD server.

#If your LDAP/AD server does not support anonymous search, you should configure this DN and ldap_search_pwd.

#ldap_searchdn = uid=searchuser,ou=people,dc=mydomain,dc=com

ldap_searchdn = CN=LDAPUser,OU=LDAP,DC=example,DC=com

#the password of the ldap_searchdn

#ldap_search_pwd = password

ldap_search_pwd = changeme

#The base DN from which to look up a user in LDAP/AD

ldap_basedn = dc=example,dc=com

#Search filter for LDAP/AD, make sure the syntax of the filter is correct.

ldap_filter = (objectClass=person)

# The attribute used in a search to match a user, it could be uid, cn, email, sAMAccountName or other attributes depending on your LDAP/AD

ldap_uid = sAMAccountName

#the scope to search for users, 1-LDAP_SCOPE_BASE, 2-LDAP_SCOPE_ONELEVEL, 3-LDAP_SCOPE_SUBTREE

ldap_scope = 3

#Timeout (in seconds) when connecting to an LDAP Server. The default value (and most reasonable) is 5 seconds.

ldap_timeout = 5

#Turn on or off the self-registration feature

self_registration = on

#The expiration time (in minute) of token created by token service, default is 30 minutes

token_expiration = 30

#The flag to control what users have permission to create projects

#The default value “everyone” allows everyone to creates a project.

#Set to “adminonly” so that only admin user can create project.

project_creation_restriction = everyone

#Determine whether the job service should verify the ssl cert when it connects to a remote registry.

#Set this flag to off when the remote registry uses a self-signed or untrusted certificate.

verify_remote_cert = on

#************************END INITIAL PROPERTIES************************

#############

The file below is the docker-compose.yml that ships with the Harbor install. Thanks to the Slack harbor channel: Sean and others from VMware helped me get this up and going.

Docker-compose.yml: to have custom networks, add the custom network settings to the base docker-compose.yml file (they live in the top-level networks: block at the end of the file)

version: '2'
services:
  log:
    image: vmware/harbor-log:v1.1.1-rc3
    container_name: harbor-log
    restart: always
    volumes:
      - /var/log/harbor/:/var/log/docker/:z
    ports:
      - 127.0.0.1:1514:514
    networks:
      - harbor
  registry:
    image: vmware/registry:photon-2.6.0
    container_name: registry
    restart: always
    volumes:
      - /data/registry:/storage:z
      - ./common/config/registry/:/etc/registry/:z
    networks:
      - harbor
    environment:
      - GODEBUG=netdns=cgo
    command:
      ["serve", "/etc/registry/config.yml"]
    depends_on:
      - log
    logging:
      driver: "syslog"
      options:
        syslog-address: "tcp://127.0.0.1:1514"
        tag: "registry"
  mysql:
    image: vmware/harbor-db:v1.1.1-rc3
    container_name: harbor-db
    restart: always
    volumes:
      - /data/database:/var/lib/mysql:z
    networks:
      - harbor
    env_file:
      - ./common/config/db/env
    depends_on:
      - log
    logging:
      driver: "syslog"
      options:
        syslog-address: "tcp://127.0.0.1:1514"
        tag: "mysql"
  adminserver:
    image: vmware/harbor-adminserver:v1.1.1-rc3
    container_name: harbor-adminserver
    env_file:
      - ./common/config/adminserver/env
    restart: always
    volumes:
      - /data/config/:/etc/adminserver/config/:z
      - /data/secretkey:/etc/adminserver/key:z
      - /data/:/data/:z
    networks:
      - harbor
    depends_on:
      - log
    logging:
      driver: "syslog"
      options:
        syslog-address: "tcp://127.0.0.1:1514"
        tag: "adminserver"
  ui:
    image: vmware/harbor-ui:v1.1.1-rc3
    container_name: harbor-ui
    env_file:
      - ./common/config/ui/env
    restart: always
    volumes:
      - ./common/config/ui/app.conf:/etc/ui/app.conf:z
      - ./common/config/ui/private_key.pem:/etc/ui/private_key.pem:z
      - /data/secretkey:/etc/ui/key:z
      - /data/ca_download/:/etc/ui/ca/:z
    networks:
      - harbor
    depends_on:
      - log
      - adminserver
      - registry
    logging:
      driver: "syslog"
      options:
        syslog-address: "tcp://127.0.0.1:1514"
        tag: "ui"
  jobservice:
    image: vmware/harbor-jobservice:v1.1.1-rc3
    container_name: harbor-jobservice
    env_file:
      - ./common/config/jobservice/env
    restart: always
    volumes:
      - /data/job_logs:/var/log/jobs:z
      - ./common/config/jobservice/app.conf:/etc/jobservice/app.conf:z
      - /data/secretkey:/etc/jobservice/key:z
    networks:
      - harbor
    depends_on:
      - ui
      - adminserver
    logging:
      driver: "syslog"
      options:
        syslog-address: "tcp://127.0.0.1:1514"
        tag: "jobservice"
  proxy:
    image: vmware/nginx:1.11.5-patched
    container_name: nginx
    restart: always
    volumes:
      - ./common/config/nginx:/etc/nginx:z
    networks:
      - harbor
    ports:
      - 80:80
      - 443:443
      - 4443:4443
    depends_on:
      - mysql
      - registry
      - ui
      - log
    logging:
      driver: "syslog"
      options:
        syslog-address: "tcp://127.0.0.1:1514"
        tag: "proxy"
networks:
  harbor:
    external: false
    driver: bridge
    ipam:
      driver: default
      config:
        - subnet: 192.168.150.0/20
          gateway: 192.168.144.1
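One thing worth double-checking in the custom network block is that the gateway actually falls inside the subnet. With a /20 prefix, 192.168.150.0 belongs to the block that starts at 192.168.144.0, which is why a gateway of 192.168.144.1 is in range. The sketch below does that arithmetic in plain shell; the function names (`ip_to_int`, `int_to_ip`, `cidr_network`, `in_network`) are my own and not part of Docker or Harbor.

```shell
#!/bin/sh
# Convert a dotted IPv4 address to an integer.
ip_to_int() (
  IFS=.
  set -- $1   # split the address on dots into $1..$4
  echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
)

# Convert an integer back to a dotted IPv4 address.
int_to_ip() {
  echo "$(( ($1 >> 24) & 255 )).$(( ($1 >> 16) & 255 )).$(( ($1 >> 8) & 255 )).$(( $1 & 255 ))"
}

# Print the network address of ADDRESS/PREFIX in CIDR form.
cidr_network() (
  addr=${1%/*}
  prefix=${1#*/}
  mask=$(( (4294967295 << (32 - $prefix)) & 4294967295 ))
  net=$(( $(ip_to_int "$addr") & $mask ))
  echo "$(int_to_ip "$net")/$prefix"
)

# Succeed if IP (second argument) is inside the CIDR block (first argument).
in_network() (
  [ "$(cidr_network "$1")" = "$(cidr_network "$2/${1#*/}")" ]
)

cidr_network 192.168.150.0/20   # prints: 192.168.144.0/20
if in_network 192.168.150.0/20 192.168.144.1; then
  echo "gateway is inside the subnet"
fi
```

If you change the subnet for your own environment, running the same check on the new values is a cheap way to catch a mismatched gateway before bringing the containers up.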

To-do’s

- Figure out notary sample

- How to host images on external storage.

- How to set up HTTPS

Enjoy

Steve Schofield

#vExpert 2017

http://vsteve.me